TL;DR

We introduce MIAM (Modality Imbalance-Aware Masking), a dynamic masking strategy that counteracts modality imbalance using modality-specific performance and learning signals. Models trained with MIAM can be evaluated under any combination of input tokens, supporting fine-grained contribution analysis across and within modalities, while improving robustness to missing data in ecological applications.

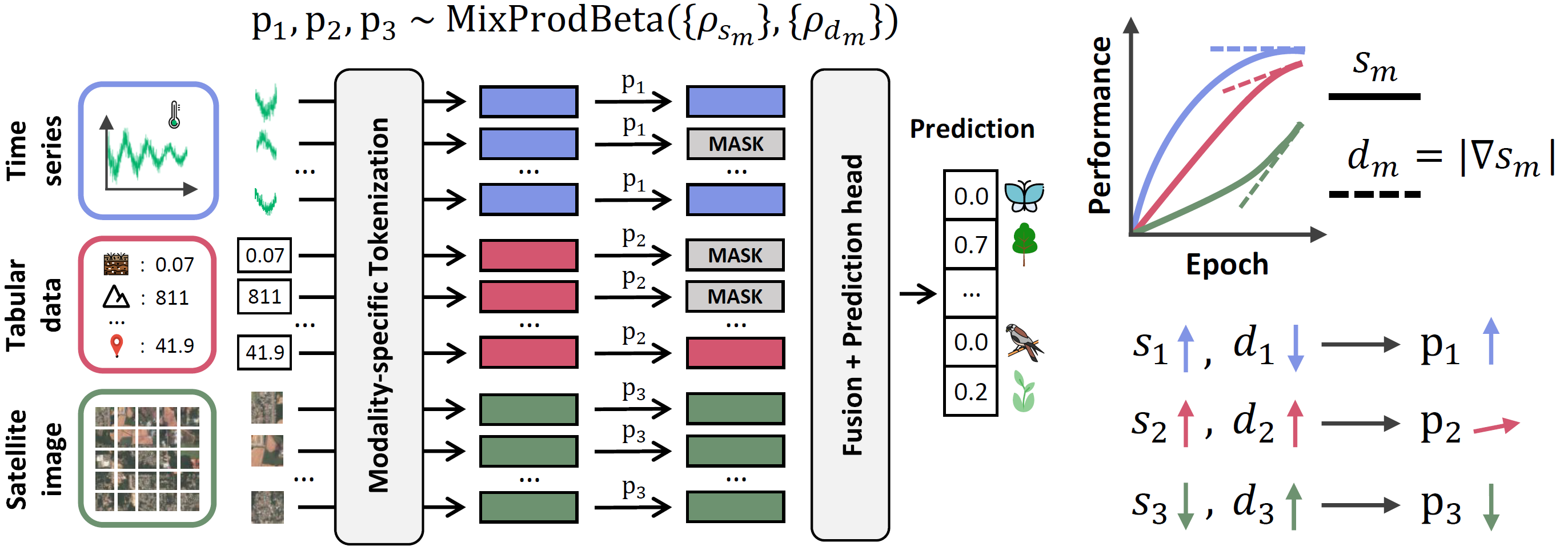

Overview of MIAM. (Left) Each token of modality $m$ is masked with probability $p_m$, sampled from a mixture of product beta distributions. (Right) The distribution parameters are modulated by $\rho_m^s$ and $\rho_m^d$, derived from the per-modality performance $s_m$ and its absolute derivative $d_m$. Modalities with strong and stable performance are masked more frequently to mitigate modality imbalance.

Introduction

Ecological modeling underpins conservation, climate adaptation, and environmental management. Modern ecological datasets are inherently multimodal, integrating heterogeneous signals such as environmental tabular variables 📊, climate time series 📈, bioacoustics 🔊, natural images 📷, and satellite imagery 🛰️.

Learning and inference in this setting are challenging because:

- inputs are often incomplete, with missing data occurring either at the modality level or within modalities;

- modalities contribute unequally, leading to modality imbalance during optimization;

- interpretability is essential, both across and within modalities.

By exposing models to incomplete inputs during training, masking strategies provide a principled approach to improving robustness to missing data while enabling structured analysis of input contributions.

Limitations of Existing Masking Strategies

Most multimodal masking strategies, however, are fixed and explore only a small part of the space of possible input subsets. As a result, they only partially improve robustness and fail to explicitly address modality imbalance, where dominant modalities hinder the learning of complementary ones.

To make this precise, we formalize masking strategies as probability distributions over the unit hypercube, where each dimension corresponds to a modality. With $M$ modalities, we define a masking probability vector $p = (p_1, \dots, p_M) \in [0,1]^M$, where $p_m$ denotes the probability of masking a token of modality $m$. A masking strategy is then a probability distribution $\mathcal{D}$ over $[0,1]^M$ from which $p$ is sampled during training.
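As a minimal sketch of this formalization, the snippet below samples a masking probability vector and then draws an independent Bernoulli mask for each token. The uniform distribution is used only as a simple stand-in for a masking strategy, and the helper name `sample_token_masks` and the token counts are hypothetical choices made for illustration.

```python
import numpy as np

def sample_token_masks(p, tokens_per_modality, rng):
    """Draw one Bernoulli mask per token; True means the token is masked."""
    return [rng.random(n) < p_m for p_m, n in zip(p, tokens_per_modality)]

rng = np.random.default_rng(0)
M = 3                               # number of modalities
tokens_per_modality = [4, 16, 8]    # hypothetical token counts per modality

# Stand-in masking strategy: the uniform distribution over [0, 1]^M.
p = rng.uniform(0.0, 1.0, size=M)

# Each token of modality m is masked independently with probability p[m].
masks = sample_token_masks(p, tokens_per_modality, rng)
```

Under this view, a different masking strategy only changes how `p` is drawn; the per-token masking step stays the same.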

Existing approaches can be interpreted within this unified framework:

MIAM 😋

MIAM provides a principled alternative built on three key properties:

- Full support: Masking probabilities are sampled over the entire hypercube, allowing any combination of masked and unmasked tokens to occur during training.

- Corner prioritization: Rather than sampling uniformly, MIAM uses a corner-anchored mixture of product beta distributions. Each mixture component concentrates probability mass near one of the $2^M$ hypercube corners, promoting training on informative subsets (e.g., single-modality or near-complete inputs).

- Imbalance awareness: MIAM dynamically adjusts the sharpness of these beta distributions based on modality-specific learning dynamics. Modalities with high and stable unimodal performance are masked more frequently, encouraging the model to better optimize slower-learning or underutilized modalities.
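The three properties can be illustrated with a rough sketch, which is not the paper's exact parameterization. It picks a hypercube corner uniformly at random and samples each $p_m$ from a beta distribution concentrated near that corner's coordinate. The per-modality score `rho` in $[0, 1]$, the `base_sharpness` parameter, the uniform corner weights, and the way `rho` enters the beta parameters are all assumptions made for illustration.

```python
import numpy as np

def sample_masking_probs(rho, rng, base_sharpness=5.0):
    """Sample p in [0, 1]^M from a corner-anchored mixture of product betas.

    rho[m] in [0, 1] is a hypothetical imbalance score: larger values
    shift the distribution of p[m] toward 1 (more masking).
    """
    rho = np.asarray(rho, dtype=float)
    # Choose one of the 2^M corners uniformly (assumed mixture weights).
    corner = rng.integers(0, 2, size=rho.shape[0])
    # Illustrative modulation: a high rho sharpens the pull toward 1
    # and flattens the pull toward 0.
    toward_one = 1.0 + (base_sharpness - 1.0) * (1.0 + rho)
    toward_zero = 1.0 + (base_sharpness - 1.0) * (1.0 - rho)
    # Corner coordinate 1 -> Beta(a, 1), mass near 1;
    # corner coordinate 0 -> Beta(1, b), mass near 0.
    a = np.where(corner == 1, toward_one, 1.0)
    b = np.where(corner == 1, 1.0, toward_zero)
    return rng.beta(a, b)

rng = np.random.default_rng(0)
rho = [0.0, 1.0, 0.3]    # hypothetical scores for three modalities
p = sample_masking_probs(rho, rng)
```

Because every beta parameter is at least 1, each component keeps full support on the hypercube, while most samples still land near a corner. In this sketch, a modality with a larger `rho` receives a higher masking probability on average.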

The following interactive visualization illustrates how these principles translate into a dynamic masking distribution during training:

Main contributions ✨

- Dynamic, imbalance-aware masking (MIAM) that mitigates modality imbalance, promotes complementary multimodal learning, and improves robustness to missing data in ecological applications ⚖️

- Consistent improvements on ecological multimodal benchmarks (GeoPlant and TaxaBench), especially for under-optimized modalities 📊

- Fine-grained interpretability across and within modalities (variables, time segments, image patches) 🔎

For full methodological details and experimental results, read the paper 📄

Citation

@inproceedings{zbinden2026miam,
  title={MIAM: Modality Imbalance-Aware Masking for Multimodal Ecological Applications},
  author={Robin Zbinden and Wesley Monteith-Finas and Gencer Sumbul and Nina van Tiel and Chiara Vanalli and Devis Tuia},
  booktitle={International Conference on Learning Representations (ICLR)},
  year={2026},
  url={https://openreview.net/forum?id=oljjAkgZN4}
}